In the last few years, rasters published as Cloud-Optimized GeoTIFFs (COGs) have generated a lot of excitement in the geospatial field. A COG is a GeoTIFF (.tif) hosted on a cloud service or file server, such as AWS S3, Microsoft Azure, or an Apache web server, whose internal layout is optimized for remote reads. While there’s a growing body of knowledge and use cases, COGs are not yet mainstream. In a recent project, we found it helpful to structure our raster data as COGs.

Data for the bees

This month we released Beescape, a web app that helps beekeepers select and manage their bee colonies. Built for a collective of researchers headed by Penn State University, the app scores the suitability of locations for bee colonies by querying rasters which store data on factors like pesticide usage and flower supply. For example, a blueberry farm in summer would have a high floral score like 90 whereas a big parking lot would have 0. Searching a location should return scores for all landscape indicators.

Each click on the map results in queries that gather data from a few different rasters, and the project has dozens of different raster data sources in total. Furthermore, the rasters are cut up by US state.

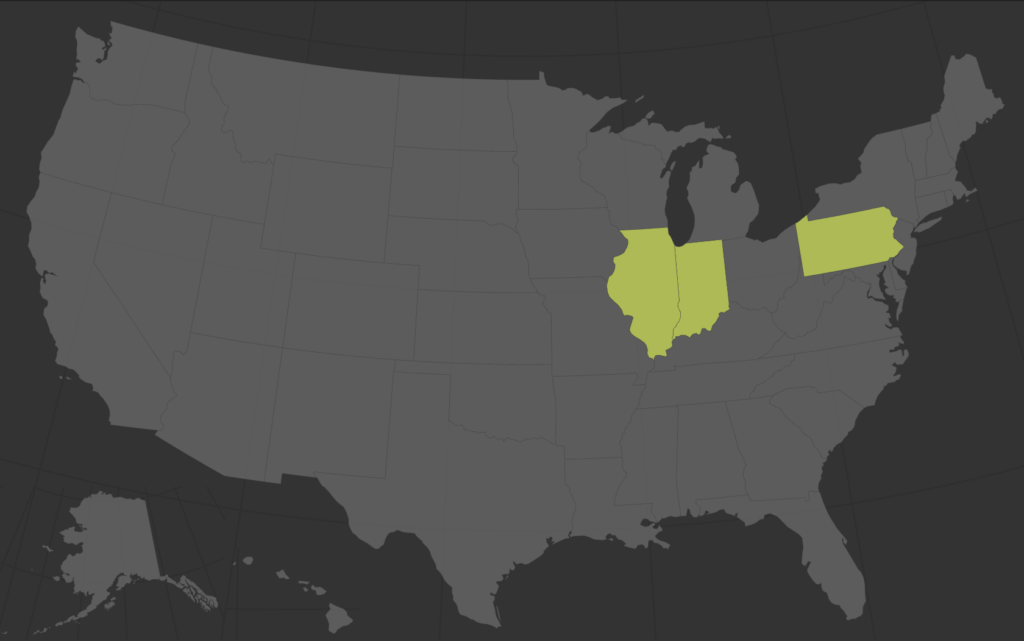

Currently we have data for the non-contiguous states Illinois, Indiana, and Pennsylvania, with the potential for the client to fill in more states over time.

COGs as a natural next step

The project has several megabytes of rasters whose data is needed only when and where the user clicks the map. We never need to visualize the rasters for the user, so we don’t need to render any map tiles. How do we process and provide the data to the app? Let’s think through some options.

A straightforward option would be to include the raster files on the app server alongside the project code. The back-end code would access the rasters directly from disk. In this case, the up-front savings introduce long-term hassle: the project code would balloon in size, and any data change would necessitate a full project redeploy.

This has been our usual method. In the last few years, however, GDAL gained the ability to perform range reads over HTTP against remotely hosted rasters. New virtual file systems are being added over time, and in 2015 GDAL added AWS S3 access. Remote raster reads paved the way for COGs and introduced new options for app architecture.

While hosting rasters on our app server is a functioning solution, COGs allow the same data to be hosted independently of the app, with a few data optimizations. As mentioned, COGs have their own data format spec. The spec suggests optimizations that address the particular demands of remote range requests against a raster. The main data organization techniques are compression, tiling, and overviews. Note that a GeoTIFF is not required to have all of these optimizations to be a functioning COG, though they can add significant speed to reads.
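To make the idea of a remote range read concrete, here is a minimal sketch of the kind of HTTP request involved: the client asks for a span of bytes rather than the whole file. The URL, offsets, and helper name are hypothetical, and GDAL builds these requests internally, so this only illustrates the mechanism.

```python
from urllib.request import Request


def build_range_request(url, start, length):
    """Build (but do not send) an HTTP GET for `length` bytes starting at `start`."""
    # HTTP byte ranges are inclusive on both ends, so the last byte
    # requested is start + length - 1.
    end = start + length - 1
    return Request(url, headers={"Range": f"bytes={start}-{end}"})


# Hypothetical COG location and byte offset, for illustration only.
request = build_range_request("https://example.com/rasters/example.tif", 16384, 4096)
print(request.get_header("Range"))
```

A server that supports range requests answers with only the requested bytes, which is what lets a reader pull a small piece of a large GeoTIFF.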

Creating a COG

Overviews come into play when the client wants to render a quick image of the whole file – it doesn’t have to download every pixel, it can just request the much smaller, already created, overview.

COG documentation, https://www.cogeo.org/in-depth.html

Tiles come into play when some small portion of the overall file needs to be processed or visualized.

In our case, it made sense to internally tile the rasters, but not create overviews. Internal tiling makes x, y coordinate lookup more efficient. On the other hand, generating overviews would resample our data in a way that gives us less accurate reads. The COG spec is a little open-ended, so choose your optimizations as needed. See GDAL documentation for robust examples on converting and optimizing a raster to a COG.
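As a rough illustration of why internal tiling helps point lookups, the sketch below maps a pixel position to the single tile that contains it. The raster width and tile size are made-up values, not Beescape's, and the function name is hypothetical.

```python
import math


def tile_for_pixel(col, row, raster_width, tile_size=256):
    """Return (tile_col, tile_row, tile_index) for the tile holding a pixel.

    Tiles are numbered left to right, then top to bottom, which is the
    order TIFF uses for its tiles.
    """
    tiles_across = math.ceil(raster_width / tile_size)
    tile_col = col // tile_size
    tile_row = row // tile_size
    return tile_col, tile_row, tile_row * tiles_across + tile_col


# Hypothetical 10,000-pixel-wide raster with 256 x 256 tiles.
print(tile_for_pixel(col=5000, row=3000, raster_width=10000))
```

Once the tile index is known, a reader only has to fetch and decompress that one tile to answer a point query.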

    gdal_translate in.tif out.tif -co TILED=YES -co COPY_SRC_OVERVIEWS=YES -co COMPRESS=LZW

We uploaded our final GeoTIFFs to AWS S3 and query them directly from the Beescape backend. The speed of each HTTP request depends on AWS services communicating with one another, and is likely slower than direct on-disk access. In implementation and testing, the average response time was a few seconds or less, which is fast enough for our use case. Prices for storing data and handling HTTP requests on S3 are extremely low; for our expected usage, we anticipate paying pennies per month.

Reading a COG

Beescape has a Python backend, so we use Rasterio to read data from our COGs. Rasterio is built upon GDAL, which uses the vsis3 and vsicurl file system handlers to perform HTTP reads of files in S3 buckets. As in our code, equip the environment with S3 credentials so that Rasterio can read rasters from your buckets. Be sure the spatial reference system of your input coordinates matches that of your rasters.
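For reference, the sketch below shows how a bucket location maps onto GDAL's virtual file system paths. The helper name and bucket are hypothetical; Rasterio also accepts `s3://` URLs directly and performs an equivalent translation itself, so a helper like this is usually not needed in practice.

```python
from urllib.parse import urlparse


def to_gdal_vsi_path(url):
    """Translate an s3:// or http(s):// URL into a GDAL virtual file system path."""
    parsed = urlparse(url)
    if parsed.scheme == "s3":
        # /vsis3/<bucket>/<key> reads from S3 using AWS credentials
        # found in the environment or GDAL configuration.
        return f"/vsis3/{parsed.netloc}{parsed.path}"
    if parsed.scheme in ("http", "https"):
        # /vsicurl/ wraps a plain URL and reads it with HTTP range requests.
        return f"/vsicurl/{url}"
    # Assume anything else is already a local or VSI path.
    return url


# Hypothetical bucket name, for illustration only.
print(to_gdal_vsi_path("s3://example-bucket/pesticide_3km.vrt"))
```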

    import rasterio
    from pyproj import Proj
    from rasterio.errors import RasterioIOError

    # gdal_configured_credentials() is a project helper (not shown) that
    # sets up S3 credentials for GDAL.

    def sample_at_point(geom, raster_path):
        with gdal_configured_credentials():
            try:
                with rasterio.open(raster_path, mode='r') as src:
                    # Reproject latlon to coords in the same SRS as the
                    # target raster
                    to_proj = Proj(src.crs)
                    x, y = to_proj(geom['lng'], geom['lat'])

                    # Sample the raster at the given coordinates
                    value_gen = src.sample([(x, y)], indexes=[1])
                    value = next(value_gen).item(0)
            except RasterioIOError as e:
                # Report read failures to the caller instead of raising
                return {
                    "data": None,
                    "error": str(e)
                }

        return {
            "data": value,
            "error": None
        }

At this point, we have created a COG and we’re using it. Great! Only one issue remains. As mentioned, we have dozens of rasters for a smattering of non-contiguous states. How does one reliably and programmatically select only the desired raster(s) given an x, y coordinate? How do we target a query at the correct subset of rasters?

Virtual Rasters

A Virtual Raster (VRT) is a list of rasters and their geographic metadata that acts like a raster telephone book. The VRT routes a request at an x, y coordinate to the correct file in its catalog of rasters, returning the data at that point or a no-data value for gaps between datasets. Typically the VRT file is placed in the same directory as its rasters. Generate a VRT with GDAL's gdalbuildvrt command-line tool or with a GDAL plugin in QGIS.
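Conceptually, the lookup a VRT performs resembles the sketch below. This is not how GDAL implements VRTs; it is a plain-Python illustration with rough, hypothetical bounding boxes and a hypothetical no-data value, meant only to show the telephone-book idea of routing a point to the file that covers it.

```python
NODATA = -9999  # Hypothetical no-data value.

# Catalog of (file name, (min_x, min_y, max_x, max_y)). The bounds are rough
# illustrative lon/lat boxes, not the real raster extents.
CATALOG = [
    ("PA_pesticide_3km.tif", (-80.6, 39.7, -74.6, 42.3)),
    ("IL_pesticide_3km.tif", (-91.6, 36.9, -87.0, 42.6)),
]


def route_point(x, y, catalog=CATALOG):
    """Return the first file whose bounding box contains (x, y), else None."""
    for name, (min_x, min_y, max_x, max_y) in catalog:
        if min_x <= x <= max_x and min_y <= y <= max_y:
            return name
    return None


def sample(x, y, read_value):
    """Sample the covering raster, or return NODATA when the point falls in a gap."""
    name = route_point(x, y)
    return read_value(name, x, y) if name else NODATA


print(route_point(-77.86, 40.79))  # A point in central Pennsylvania
print(route_point(-100.0, 45.0))   # A point outside both boxes
```

Here `read_value` stands in for whatever function actually reads a pixel from a file; with a real VRT, GDAL handles both the routing and the read.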

There are many CLI options, but most simply:

    gdalbuildvrt <destination_vrt_name> <file1> <file2>

Our code looks something like:

    gdalbuildvrt pesticide_3km.vrt PA_pesticide_3km.tif IL_pesticide_3km.tif

Conclusion

COGs can have a spectrum of implementations. Our use case may not be a “true” COG, as it does not have all optimization methods applied; however, it is the right fit for Beescape’s architecture. Moving data outside the app gives the project flexibility, all thanks to recent innovations by GDAL around remote reads and virtual file systems.

Shortly after implementation, data for Indiana was added to Beescape. Because we use COGs, integrating the new data was seamless. The client dropped the new tiled GeoTIFFs in S3, and on our end we updated the VRTs with the GDAL command. No app bundle changes, no redeploys. As a bonus safety measure, the app is protected against any mistakes made by the client during data upload, since new files aren’t picked up until a new VRT is generated. Beescape is live! Check it out, and tell your beekeeper friends!