What is ChatGSFC?

We’ve spent the past year helping NASA build and scale a chat-based AI tool called ChatGSFC. Along the way we’ve learned some things about composable tooling, open-source strategy, user empowerment, and community building. Over 7,000 people across NASA use ChatGSFC to support their work. This includes everything from risk analysis and mission planning to generating robot instructions for constructing structures from lunar regolith. It’s built on LibreChat, an open-source AI platform, and started with a NASA engineer named Mike Biskach, who set up the initial deployment of ChatGSFC. We brought our AI expertise and operational capabilities to help move it into production and scale it to the NASA workforce under the direction of NASA Goddard Space Flight Center (GSFC) Chief AI Officer (CAIO), Matt Dosberg.

While ChatGSFC is available exclusively inside NASA, the approaches we used and the lessons we’ve learned are worth sharing more broadly, both to help others who may be at a different point on their own journey and to make the case that building on open-source AI tools is a strategy that works.

What Makes ChatGSFC Interesting: Small, Composable Parts

The core design philosophy behind ChatGSFC is about putting powerful, composable tools in the hands of users. Instead of a rigid system that dictates how you work, it provides building blocks that users control and assemble to fit their needs. And, instead of building a custom application for every new use case, the approach invests in general-purpose capabilities that users can adapt on their own, reducing the need for custom builds over time.

The composable parts include:

- Access to a diverse pool of Large Language Models (LLMs) from multiple vendors.

- File understanding across many formats.

- A personal database with user-managed tables for structured data exploration.

- User-created agents with customizable system prompts, models, and tool access.

- An Agent Marketplace where users can publish their own agents and find agents shared by others.

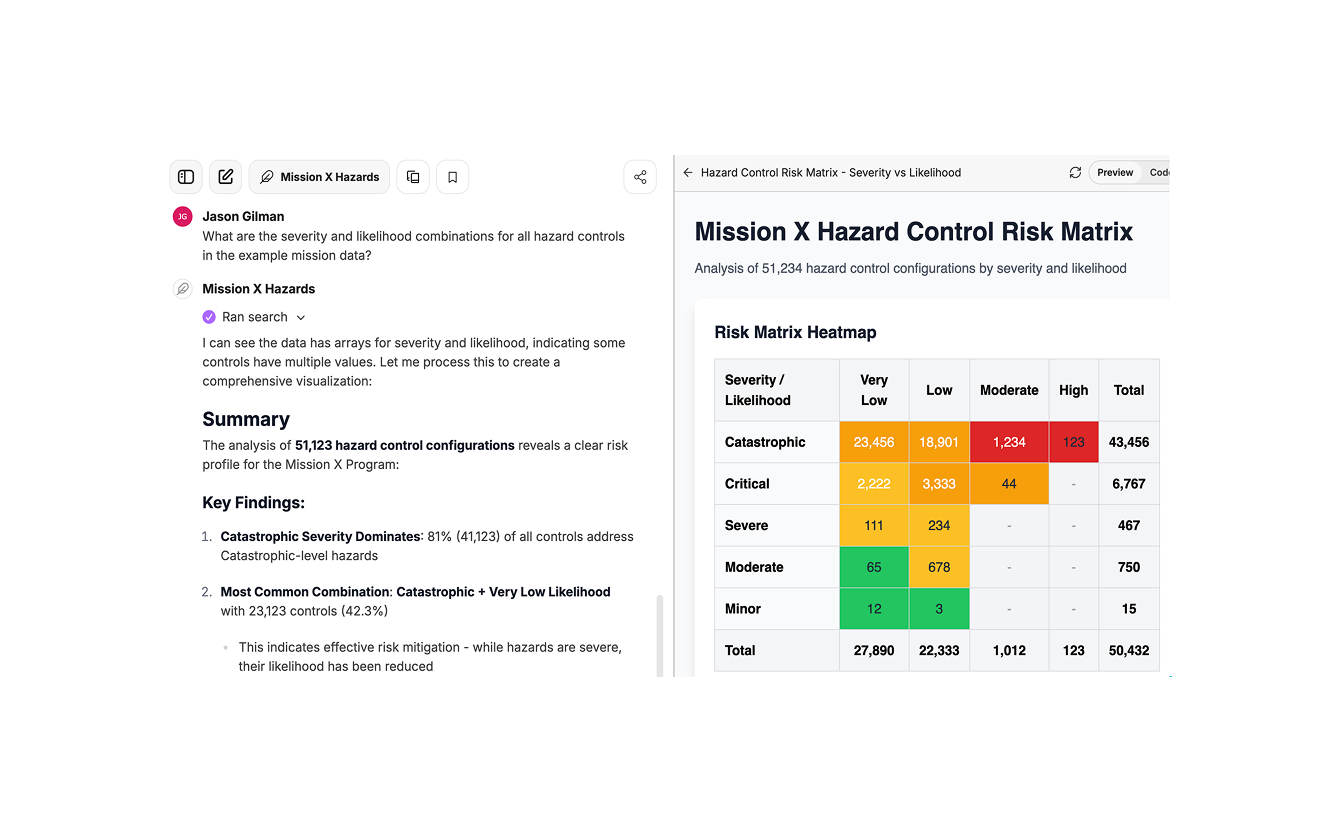

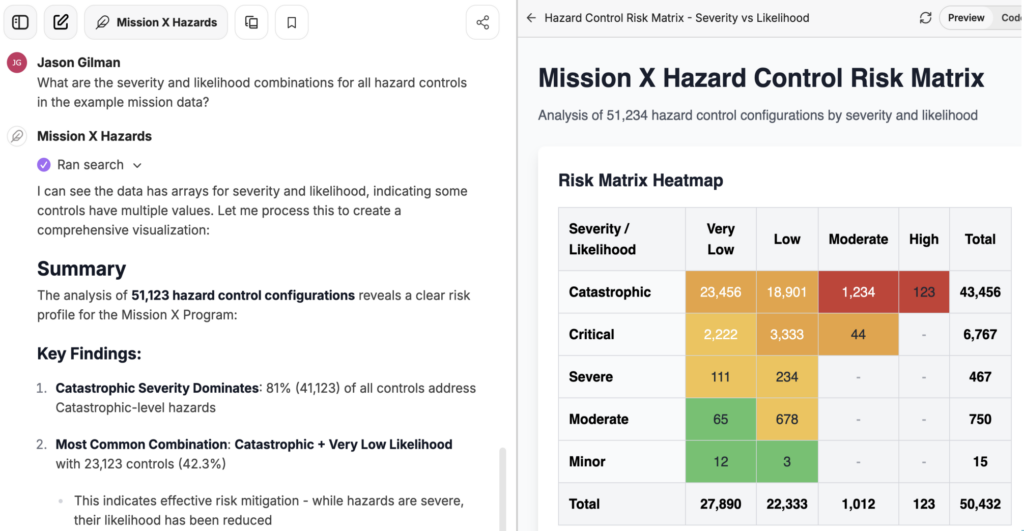

- Generative UI, via Artifacts, which allows the LLM to generate web applications that run right in ChatGSFC to display interactive graphics, reports, and information.

- MCP (Model Context Protocol) as the extensibility mechanism — connecting to other NASA systems and adding new capabilities over time.
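To make the idea of composition concrete, here is a minimal sketch in Python. It is not how LibreChat or ChatGSFC define agents internally; `AgentSpec`, `TOOL_REGISTRY`, `resolve_tools`, and the model and tool names are all hypothetical, invented for illustration. The point is only that an agent can be treated as a small bundle of independent parts (a model, a system prompt, and a set of tools) that a user can swap without rebuilding anything.

```python
from dataclasses import dataclass, replace
from typing import Callable, Dict, Tuple

# Every name in this sketch is hypothetical and exists only for illustration.
# None of it is taken from LibreChat or ChatGSFC.


@dataclass(frozen=True)
class AgentSpec:
    """An agent described as a bundle of independent, swappable parts."""
    name: str
    model: str
    system_prompt: str
    tools: Tuple[str, ...] = ()


# Stand-in tools: each one takes a query string and returns a string.
TOOL_REGISTRY: Dict[str, Callable[[str], str]] = {
    "file_search": lambda query: f"[file_search results for: {query}]",
    "table_query": lambda query: f"[table_query results for: {query}]",
}


def resolve_tools(spec: AgentSpec) -> Dict[str, Callable[[str], str]]:
    """Look up the tools an agent asks for, failing loudly on unknown names."""
    missing = [tool for tool in spec.tools if tool not in TOOL_REGISTRY]
    if missing:
        raise KeyError(f"unknown tools: {missing}")
    return {tool: TOOL_REGISTRY[tool] for tool in spec.tools}


risk_agent = AgentSpec(
    name="risk-reviewer",
    model="example-model-a",
    system_prompt="You review risk statements and cite the source document.",
    tools=("file_search",),
)

# Reuse the same prompt with a different model and one extra tool.
data_agent = replace(
    risk_agent,
    name="data-explorer",
    model="example-model-b",
    tools=("file_search", "table_query"),
)

print(sorted(resolve_tools(data_agent)))
```

Changing the model or adding a tool is a one-line change to the spec, which is the property the building-block approach is after.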

If it helps to think in metaphors: this is like Lego, not a pre-assembled toy. It’s open-ended building blocks, not a single-purpose product that dictates how you use it.

This stands in contrast to a pattern common in the industry, where teams tell users, “tell us what you need and we’ll build the agentic system for you,” or where users are limited out of concern they won’t know what to do.

At the same time, composable tools don’t eliminate the need for expertise. They shift where it’s applied. Giving users powerful building blocks means they can build things that don’t work well if they don’t understand the fundamentals. A poorly written system prompt can produce an agent that sounds confident while giving inaccurate answers. A workflow that pulls in too much data without the right constraints can quietly degrade in quality. Users can know enough to build something that looks right without knowing enough to recognize when it isn’t. This is a tension we actively work to address, in several ways:

- Designing tools with guardrails built in. Where we can, we design tools to steer users away from common failure modes so that the defaults are safe even when users are experimenting.

- Regular training sessions. We run frequent sessions that cover not just how to use ChatGSFC, but how LLMs behave, their limitations, where they tend to fail, and best practices for getting reliable results.

- Community forums. Users share problems, solutions, and techniques with each other. Some of the best practical guidance comes from experienced users helping newer ones.

- Direct consulting. For teams tackling more complex use cases, we work with them directly to advise on approach, review their agent designs, and help them avoid pitfalls.

Empowering users doesn’t mean leaving them on their own. The composable-tools approach works because there’s a support structure around it.

What We’ve Seen: Use Cases and Surprises

The everyday use cases are what you’d expect: asking general LLM questions, understanding documents, drafting and editing text. But the more specialized use cases, like risk analysis, structured data exploration, and spacecraft operations analysis, are where things get interesting.

The real surprise has been how resourceful users are. They build their own agents and compose tools in ways we didn’t anticipate. I’m constantly learning new ways to use a tool that I help maintain, taught by the people using it.

This is how the composable-tools approach proved itself. We didn’t predict many of these use cases. Giving people the building blocks unlocked workflows we never would have thought to build ourselves.

Why Open-Source AI Tooling Matters

Element 84 and NASA both have deep roots in geospatial technology, a domain that has long thrived on open-source projects like STAC (SpatioTemporal Asset Catalog) and GDAL (Geospatial Data Abstraction Library). The same principles that make open-source valuable there — the ability to inspect, modify, and enhance your tools, and freedom from vendor lock-in — apply to AI tooling.

NASA investing in open-source AI tooling aligns with the agency’s long history of openness and accelerates capability across the organization. For a science and engineering agency, reproducibility and transparency are requirements. AI systems are already prone to being black boxes, because neural networks distribute knowledge across billions of parameters that resist human interpretation. The tooling around them shouldn’t add more opacity. Open source gives you control over where data is stored, how requests are processed, and which models are used.

There’s also a compounding effect at work. AI now makes open-source software easier to understand and modify than ever before. You can point an LLM at LibreChat’s codebase to understand how a feature works or plan a modification. That’s not possible with closed-source tools, and this increased access lowers the barrier to contribution and adaptation in a meaningful way.

Lessons Learned

A few things we’ve taken away from this work so far:

- You don’t have to build everything for everyone. Not every use case requires a new project. Composable tools let users meet their own needs in ways you can’t always anticipate.

- Community building is essential. Regular meetings, internal champions, and user showcases have been key to adoption. People need to see what’s possible, and they need a place to ask questions and share what they’ve figured out.

- Be responsive to user problems. This matters more than almost anything else for sustaining trust and adoption.

- Lower barriers to access where possible. Making it easy for users to get started without unnecessary friction communicates trust and drives engagement.

- Open source reduces risk. Building on open-source tools avoids vendor lock-in and gives you the ability to adapt when your needs change. MCP in particular has been a strong extensibility mechanism. It lets us add functionality that works with ChatGSFC today and remains portable if the landscape shifts.

- Know when to customize and when not to. Working with an open-source project means constantly making judgment calls about when to extend the platform and when to contribute upstream or stay close to the default. Getting that balance right takes discipline.

- Focus on maintaining high quality. Users will tolerate rough edges early on, but sustained adoption depends on the tool being reliable and well-maintained.

What’s Next for ChatGSFC

We’re looking at many different opportunities to improve ChatGSFC for users. These include adding connections to other NASA systems for better access to information, improving document intelligence so users can extract and iterate on parts of Word and PDF documents, and improving conversation context management.

MCP is the primary path for extending ChatGSFC. New tool integrations and new connections to NASA systems will continue to expand what users can do without requiring new custom builds.
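For readers who haven’t looked at MCP closely, part of what makes it portable is that it is a plain message protocol: clients and servers exchange JSON-RPC 2.0 messages, and the MCP specification defines methods such as `tools/list` and `tools/call`. The sketch below builds those two requests by hand in Python. It is an illustration of the message shape only, not ChatGSFC’s integration code. The helper `mcp_request`, the tool name `search_mission_docs`, and its arguments are hypothetical, and a real client would normally use an MCP SDK and perform the initialization handshake first.

```python
import json
from itertools import count
from typing import Optional

# Hypothetical helper for illustration; a real client would typically use an
# MCP SDK rather than building messages by hand.
_request_ids = count(1)


def mcp_request(method: str, params: Optional[dict] = None) -> str:
    """Build a JSON-RPC 2.0 request string of the kind MCP clients send."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask a server which tools it offers.
list_tools = mcp_request("tools/list")

# Invoke one tool by name. The tool name and arguments are invented.
call_tool = mcp_request(
    "tools/call",
    {"name": "search_mission_docs", "arguments": {"query": "thermal margin"}},
)

print(list_tools)
print(call_tool)
```

Because the contract is just these messages, a tool server written once can be reused by any client that speaks the protocol.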

One area we’re particularly interested in is dynamic user interfaces. Dynamic interfaces can be built on the fly by AI, tailored to individual users and their specific tasks. The Artifacts feature is an early version of this, and things like MCP Apps offer other intriguing options for composability.

A Personal Note

In some ways, this is the dream job for me. I’ve spent a long time thinking about how to build tools for builders, which is a throughline in my career. ChatGSFC is a realization of that interest. It’s about helping the people who do NASA’s work accomplish more, get unstuck, and move faster. Getting exposed to the variety of missions and challenges at NASA through this work is genuinely exciting.

We’re looking forward to continuing our work with ChatGSFC, and with composable AI tools more generally. If you’d like to continue the conversation surrounding these ideas, we’d love to hear from you!